How does database pessimistic locking interact with INSERT, UPDATE, and DELETE SQL statements

Introduction

Relational database systems employ various Concurrency Control mechanisms to provide transactions with ACID property guarantees. While isolation levels are one way of choosing a given Concurrency Control mechanism, you can also use explicit locking whenever you want finer-grained control to prevent data integrity issues.

As previously explained, there are two types of explicit locking mechanisms: pessimistic (physical) and optimistic (logical). In this post, I’m going to explain how explicit pessimistic locking interacts with non-query DML statements (e.g. insert, update, and delete).

Domain Model

For the upcoming test cases, we are going to use the following entities:

The Post entity can have one or more PostComment(s) which are associated with their parent entity through the post_id Foreign Key.

Explicit locking

Depending on the underlying database capabilities, a query can also acquire an exclusive (write) or a shared (read) lock on the database rows that are selected by the currently running statement. To see how the pessimistic locks interact with concurrent INSERT, UPDATE, and DELETE statements, we can use exclusive locks since they are better supported by most relational databases.

In the following examples, Alice is going to select all PostComment(s) belonging to a given Post entity while also acquiring an exclusive lock on the selected records. To simplify the lock acquisition, we can use the PESSIMISTIC_WRITE LockModeType offered by the Java Persistence API. Therefore, Alice's query looks as follows:

    List<PostComment> comments = session.createQuery(
        "select c " +
        "from PostComment c " +
        "where c.post.id = :id", PostComment.class)
    .setParameter("id", 1L)
    .setLockMode(LockModeType.PESSIMISTIC_WRITE)
    .getResultList();

This way, Hibernate is going to generate the database-specific locking clause on our behalf.

For instance, on Oracle and MySQL, the SQL query looks as follows:

    SELECT
        pc.id as id1_1_,
        pc.post_id as post_id3_1_,
        pc.review as review2_1_
    FROM post_comment pc
    WHERE pc.post_id = 1
    FOR UPDATE

On PostgreSQL, an additional OF clause naming the table alias is employed as well:

    SELECT
        pc.id as id1_1_,
        pc.post_id as post_id3_1_,
        pc.review as review2_1_
    FROM post_comment pc
    WHERE pc.post_id = 1
    FOR UPDATE OF pc

On SQL Server, the syntax is a little different from the previous databases, since the lock is requested through a table hint:

    SELECT
        pc.id as id1_1_,
        pc.post_id as post_id3_1_,
        pc.review as review2_1_
    FROM post_comment pc
    WITH (UPDLOCK, ROWLOCK)
    WHERE pc.post_id = 1

However, you don’t have to worry about all these differences since Hibernate takes care of them for you.
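To make the differences between these locking clauses easier to compare, here is a minimal sketch that rebuilds the three SQL shapes shown above from plain strings. The LockingClauseSketch class, its Database enum, and the lockedSelect method are hypothetical names made up for this illustration. This is not how Hibernate implements its dialects, just a toy that mirrors the generated SQL, with the column list shortened to pc.* for brevity.

```java
// Toy illustration only: NOT Hibernate's Dialect API.
// It merely mirrors the locking syntax of the SQL statements shown above.
final class LockingClauseSketch {

    enum Database { ORACLE, MYSQL, POSTGRESQL, SQL_SERVER }

    // Builds a locking SELECT for the given table alias and filtering predicate.
    static String lockedSelect(Database db, String table, String alias, String predicate) {
        String select = "SELECT " + alias + ".* FROM " + table + " " + alias;
        switch (db) {
            case SQL_SERVER:
                // SQL Server takes a table hint placed before the WHERE clause
                return select + " WITH (UPDLOCK, ROWLOCK) WHERE " + predicate;
            case POSTGRESQL:
                // PostgreSQL names the alias whose rows should be locked
                return select + " WHERE " + predicate + " FOR UPDATE OF " + alias;
            default:
                // Oracle and MySQL use a plain FOR UPDATE suffix
                return select + " WHERE " + predicate + " FOR UPDATE";
        }
    }

    public static void main(String[] args) {
        for (Database db : Database.values()) {
            System.out.println(db + ": "
                + lockedSelect(db, "post_comment", "pc", "pc.post_id = 1"));
        }
    }
}
```

With JPA, none of this string handling is needed in application code, since setting the PESSIMISTIC_WRITE lock mode on the query is enough.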

Update statement

Once the database records are locked, no UPDATE statement can modify them, even on an MVCC database engine.

Until Alice releases the locks by ending her transaction, Bob’s UPDATE statement is blocked, and his transaction can no longer make any progress. After Alice has committed, the locks are released, and Bob’s transaction can continue.
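The blocking behavior can be mimicked without a database. The following sketch is only an in-memory analogy: a ReentrantLock stands in for the row lock, a background thread plays Alice, and the calling thread plays Bob. The RowLockSketch class and its simulate method are hypothetical names. A real database would make Bob's UPDATE wait, whereas here Bob uses a timed tryLock so the program can observe that the lock is unavailable.

```java
import java.util.concurrent.CountDownLatch;
import java.util.concurrent.TimeUnit;
import java.util.concurrent.locks.ReentrantLock;

// In-memory analogy only: a ReentrantLock plays the role of a row-level lock.
final class RowLockSketch {

    // Returns { Bob was blocked while Alice held the lock, Bob succeeded after commit }.
    static boolean[] simulate() {
        ReentrantLock rowLock = new ReentrantLock();
        CountDownLatch aliceHoldsLock = new CountDownLatch(1);
        CountDownLatch aliceMayCommit = new CountDownLatch(1);

        Thread alice = new Thread(() -> {
            rowLock.lock();                  // stands in for SELECT ... FOR UPDATE
            try {
                aliceHoldsLock.countDown();
                aliceMayCommit.await();      // Alice's transaction is still open
            } catch (InterruptedException e) {
                Thread.currentThread().interrupt();
            } finally {
                rowLock.unlock();            // ending the transaction releases the lock
            }
        });

        try {
            alice.start();
            aliceHoldsLock.await();

            // Bob's UPDATE attempt while Alice's transaction is open
            boolean acquiredEarly = rowLock.tryLock(100, TimeUnit.MILLISECONDS);
            if (acquiredEarly) rowLock.unlock();

            aliceMayCommit.countDown();
            alice.join();                    // Alice has committed

            // Bob's UPDATE attempt after the lock was released
            boolean acquiredAfterCommit = rowLock.tryLock(1, TimeUnit.SECONDS);
            if (acquiredAfterCommit) rowLock.unlock();

            return new boolean[] { !acquiredEarly, acquiredAfterCommit };
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
            throw new IllegalStateException(e);
        }
    }

    public static void main(String[] args) {
        boolean[] result = simulate();
        System.out.println("Bob blocked while Alice holds the lock: " + result[0]);
        System.out.println("Bob proceeds after Alice commits: " + result[1]);
    }
}
```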

Delete statement

Just like the UPDATE, a DELETE statement will also be blocked by the row-level locks acquired previously by Alice’s transaction:

Once the locks are released by Alice, Bob’s transaction can continue, and the DELETE is executed.

Insert statement

While UPDATE and DELETE statements behave consistently on most relational database systems (e.g. MySQL, PostgreSQL), the INSERT statement behaves differently.

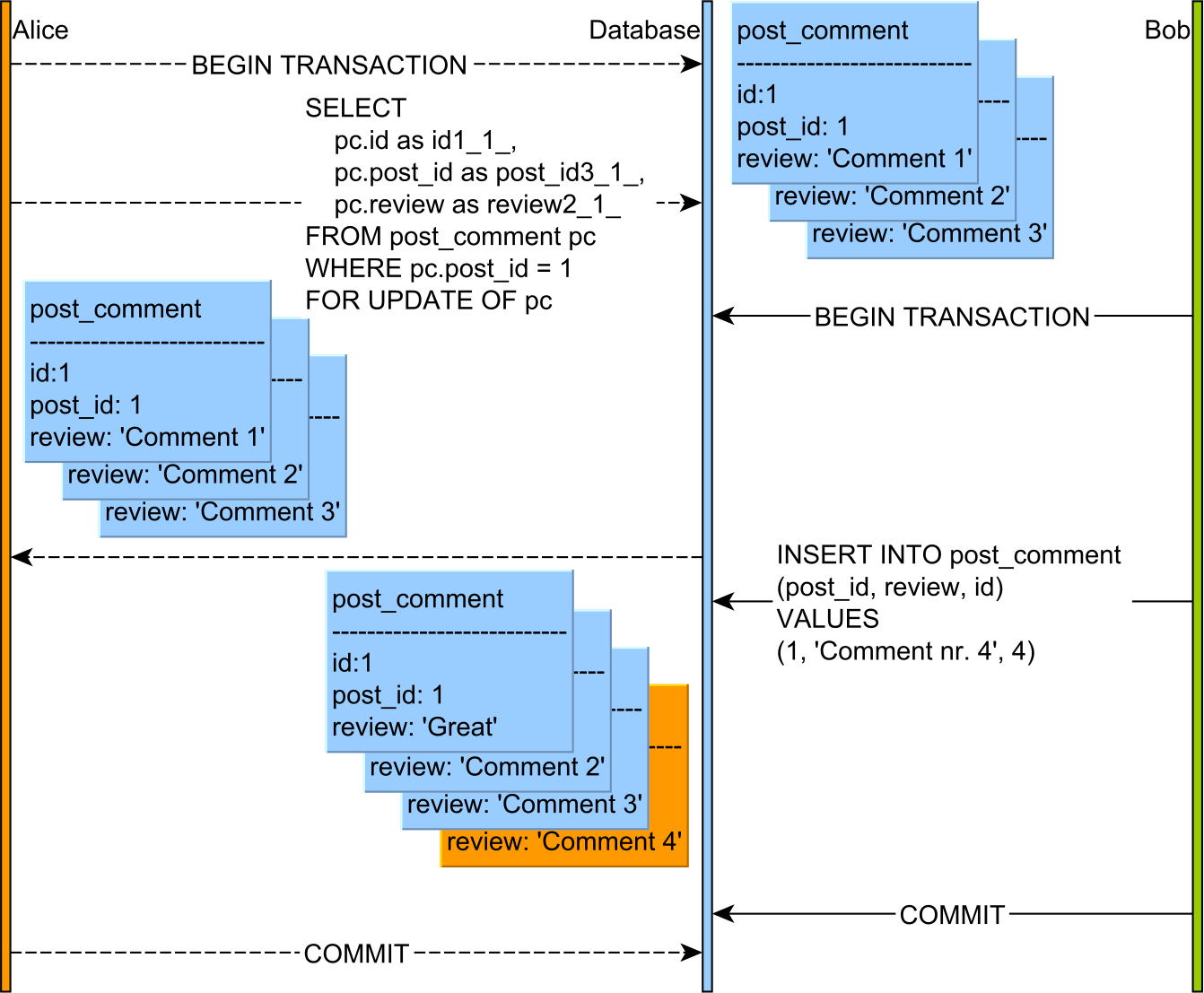

Insert statement with MySQL

On MySQL, the explicit pessimistic locking clause not only acquires row-level locks on each selected record, but it can also acquire predicate locks because the InnoDB clustered index supports gap and next-key locking.

This behavior can be observed only when using the default REPEATABLE READ isolation level. When switching to READ COMMITTED, MySQL behaves like PostgreSQL. One explanation would be that locks on records that did not match the scan are released after query execution. For more info, read this article on the Percona blog.

So, Bob’s INSERT is blocked until Alice releases the predicate lock which was acquired on all (existing and future) PostComment entries that are associated with a given parent Post record.

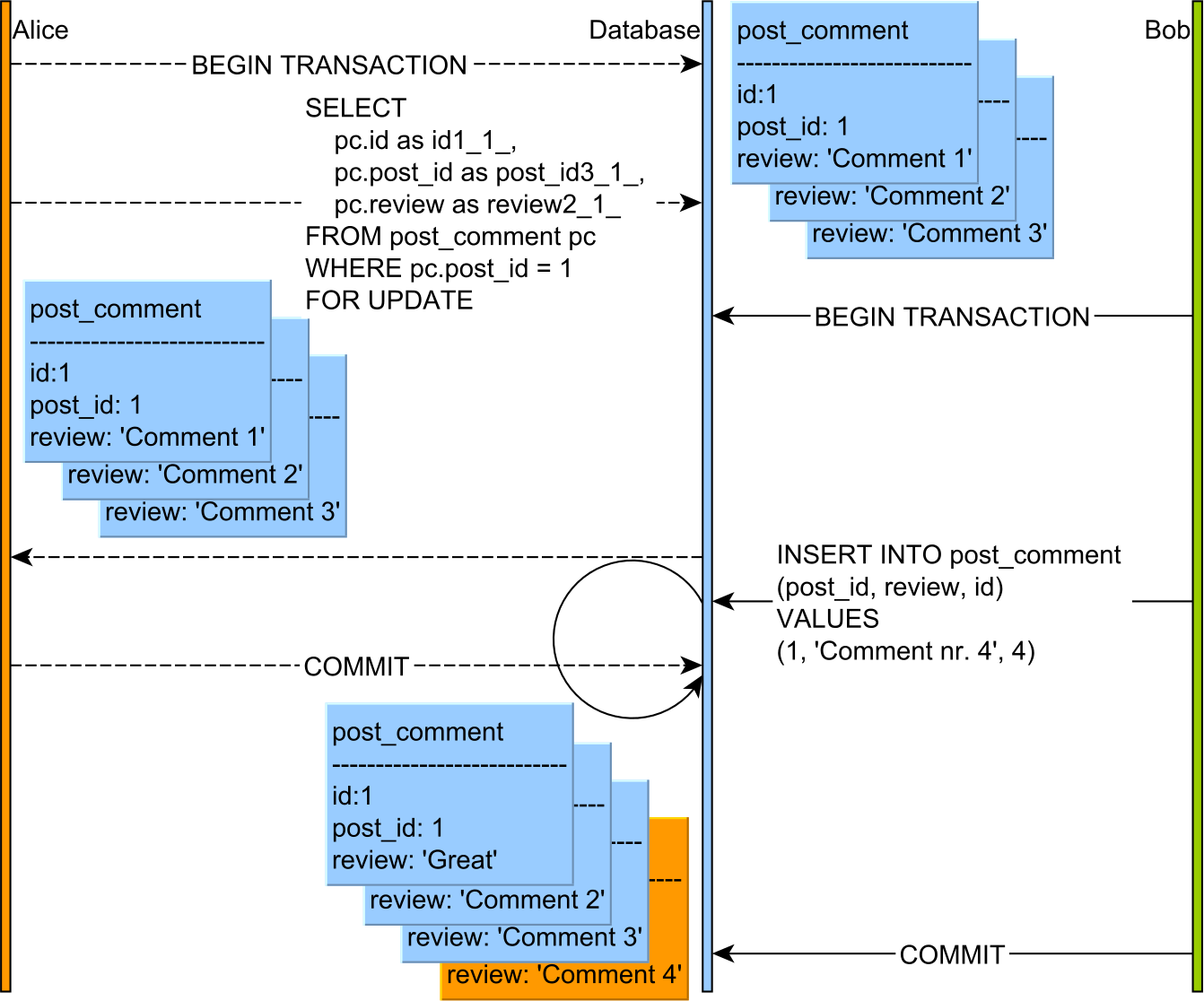

Insert statement with PostgreSQL

Unfortunately, explicit predicate locks are more an exception than a rule since most RDBMS don’t offer support for such a construct. For this reason, many relational databases behave like PostgreSQL:

Bob’s INSERT statement is executed right away even if Alice’s transaction tried to lock all PostComment entries.
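The contrast between the two behaviors can be summarized with a small toy model. The PredicateLockSketch class below is hypothetical and greatly simplified: it tracks locked comment ids and, only when predicate locking is enabled, the post_id values covered by the locking query. Real InnoDB gap and next-key locks work on index ranges and can cover more than a single key value, so treat this as a sketch of the idea rather than a description of either engine.

```java
import java.util.HashSet;
import java.util.Set;

// Toy model only: contrasts plain row locks with row locks plus predicate locks.
final class PredicateLockSketch {

    private final boolean supportsPredicateLocks;
    private final Set<Long> lockedCommentIds = new HashSet<>();
    private final Set<Long> lockedPostIds = new HashSet<>();

    PredicateLockSketch(boolean supportsPredicateLocks) {
        this.supportsPredicateLocks = supportsPredicateLocks;
    }

    // Alice: select the comments of a post with an exclusive lock.
    void lockCommentsOfPost(long postId, Set<Long> matchingCommentIds) {
        lockedCommentIds.addAll(matchingCommentIds);   // existing rows are always locked
        if (supportsPredicateLocks) {
            lockedPostIds.add(postId);                 // future rows matching the filter too
        }
    }

    // Bob: UPDATE or DELETE of an existing comment.
    boolean updateOrDeleteBlocked(long commentId) {
        return lockedCommentIds.contains(commentId);
    }

    // Bob: INSERT of a new comment for the given post.
    boolean insertBlocked(long postId) {
        return lockedPostIds.contains(postId);
    }

    public static void main(String[] args) {
        PredicateLockSketch withPredicateLocks = new PredicateLockSketch(true);
        PredicateLockSketch rowLocksOnly = new PredicateLockSketch(false);
        withPredicateLocks.lockCommentsOfPost(1L, Set.of(10L, 11L));
        rowLocksOnly.lockCommentsOfPost(1L, Set.of(10L, 11L));
        System.out.println("INSERT blocked with predicate locks: "
            + withPredicateLocks.insertBlocked(1L));
        System.out.println("INSERT blocked with row locks only: "
            + rowLocksOnly.insertBlocked(1L));
    }
}
```

In both configurations, updating or deleting an already selected comment is blocked. Only the predicate-locking variant also blocks a new comment for the same post.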

Conclusion

In a previous article, I wrote about the Write Skew anomaly and how it differs between 2PL and MVCC. Now, you can draw some similarities between the Write Skew anomaly prevention and the physical predicate lock support.

Therefore, on most RDBMS, explicit physical locks can only prevent record modifications for database records that existed at the time of locking while future records can be added. MySQL is an exception to this rule, preventing INSERT statements against a range of locked entries.

So, where physical predicate locks are supported, the very same outcome you get from using Serializable in regard to Phantom Reads or Write Skews, you also get by using explicit physical locking on a lesser isolation level. However, just like Two-Phase Locking, explicit locking has its price, so use it judiciously.