PostgreSQL SERIAL or IDENTITY column and Hibernate IDENTITY generator

Are you struggling with performance issues in your Spring, Jakarta EE, or Java EE application?

Imagine having a tool that could automatically detect performance issues in your JPA and Hibernate data access layer long before pushing a problematic change into production!

With the widespread adoption of AI agents generating code in a heartbeat, having such a tool that can watch your back and prevent performance issues during development, long before they affect production systems, can save your company a lot of money and make you a hero!

Hypersistence Optimizer is that tool, and it works with Spring Boot, Spring Framework, Jakarta EE, Java EE, Quarkus, Micronaut, or Play Framework.

So, rather than allowing performance issues to annoy your customers, you are better off preventing those issues using Hypersistence Optimizer and enjoying spending your time on the things that you love!

Introduction

When using PostgreSQL, it’s tempting to use a SERIAL or BIGSERIAL column type to auto-increment Primary Keys.

PostgreSQL 10 also added support for IDENTITY, which behaves in the same way as the legacy SERIAL or BIGSERIAL type.

This article will show you that SERIAL, BIGSERIAL, and IDENTITY are not a very good idea when using JPA and Hibernate.

How to customize the Hypersistence Utils JSON Serializer

Are you struggling with performance issues in your Spring, Jakarta EE, or Java EE application?

Imagine having a tool that could automatically detect performance issues in your JPA and Hibernate data access layer long before pushing a problematic change into production!

With the widespread adoption of AI agents generating code in a heartbeat, having such a tool that can watch your back and prevent performance issues during development, long before they affect production systems, can save your company a lot of money and make you a hero!

Hypersistence Optimizer is that tool, and it works with Spring Boot, Spring Framework, Jakarta EE, Java EE, Quarkus, Micronaut, or Play Framework.

So, rather than allowing performance issues to annoy your customers, you are better off preventing those issues using Hypersistence Optimizer and enjoying spending your time on the things that you love!

Introduction

The Hypersistence Utils open-source project allows you to map JSON, ARRAY, and PostgreSQL ENUM types and provides a simple way of adding immutable Hibernate Types.

After adding support for customizing the Jackson ObjectMapper, the next most-wanted issue was to provide a way to customize the JSON serializing mechanism.

In this article, you are going to see how you can customize the JSON serializer used by the JSON Types.

How to customize the #JSON Serializer used by #Hibernate-Types - @vlad_mihalcea https://t.co/HD2OJJnl1S pic.twitter.com/zNBslsftIt

— Java (@java) March 31, 2018

How to use a JVM or database auto-generated UUID identifier with JPA and Hibernate

Are you struggling with performance issues in your Spring, Jakarta EE, or Java EE application?

Imagine having a tool that could automatically detect performance issues in your JPA and Hibernate data access layer long before pushing a problematic change into production!

With the widespread adoption of AI agents generating code in a heartbeat, having such a tool that can watch your back and prevent performance issues during development, long before they affect production systems, can save your company a lot of money and make you a hero!

Hypersistence Optimizer is that tool, and it works with Spring Boot, Spring Framework, Jakarta EE, Java EE, Quarkus, Micronaut, or Play Framework.

So, rather than allowing performance issues to annoy your customers, you are better off preventing those issues using Hypersistence Optimizer and enjoying spending your time on the things that you love!

Introduction

In this article, we are going to see how to use a UUID entity identifier that is auto-generated by Hibernate either in the JVM or using database-specific UUID functions.

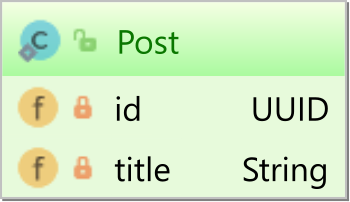

Our Post entity looks as follows:

The Post entity has a UUID identifier and a title. Now, let’s see how we can map the Post entity so that the UUID identifier be auto-generated for us.

How to use database-specific or Hibernate-specific features without sacrificing portability

Are you struggling with performance issues in your Spring, Jakarta EE, or Java EE application?

Imagine having a tool that could automatically detect performance issues in your JPA and Hibernate data access layer long before pushing a problematic change into production!

With the widespread adoption of AI agents generating code in a heartbeat, having such a tool that can watch your back and prevent performance issues during development, long before they affect production systems, can save your company a lot of money and make you a hero!

Hypersistence Optimizer is that tool, and it works with Spring Boot, Spring Framework, Jakarta EE, Java EE, Quarkus, Micronaut, or Play Framework.

So, rather than allowing performance issues to annoy your customers, you are better off preventing those issues using Hypersistence Optimizer and enjoying spending your time on the things that you love!

Introduction

Like other non-functional requirements, portability is a feature. While portability is very important when working on an open-source framework that will be used in a large number of setups, for end systems, portability might not be needed at all.

This article aims to explain that you don’t have to avoid database or framework-specific features if you want to achieve portability.

How to customize the Hypersistence Utils Jackson ObjectMapper

Are you struggling with performance issues in your Spring, Jakarta EE, or Java EE application?

Imagine having a tool that could automatically detect performance issues in your JPA and Hibernate data access layer long before pushing a problematic change into production!

With the widespread adoption of AI agents generating code in a heartbeat, having such a tool that can watch your back and prevent performance issues during development, long before they affect production systems, can save your company a lot of money and make you a hero!

Hypersistence Optimizer is that tool, and it works with Spring Boot, Spring Framework, Jakarta EE, Java EE, Quarkus, Micronaut, or Play Framework.

So, rather than allowing performance issues to annoy your customers, you are better off preventing those issues using Hypersistence Optimizer and enjoying spending your time on the things that you love!

Introduction

The Hypersistence Utils open-source project allows you to map JSON, and ARRAY when using JPA and Hibernate.

Since I launched this project, one of the most wanted features was to add support for customizing the underlying Jackson ObjectMapper, and since version 2.1.1, this can be done either declaratively or programmatically.

In this article, you are going to see how to customize the ObjectMapper when using the Hypersistence Utils project.

How to customize the Jackson ObjectMapper used by #Hibernate-Types - @vlad_mihalceahttps://t.co/nF1CcLVL7I pic.twitter.com/lBIMsw0Hh7

— Java (@java) March 10, 2018

How to get the actual execution plan for an Oracle SQL query using Hibernate query hints

Are you struggling with performance issues in your Spring, Jakarta EE, or Java EE application?

Imagine having a tool that could automatically detect performance issues in your JPA and Hibernate data access layer long before pushing a problematic change into production!

With the widespread adoption of AI agents generating code in a heartbeat, having such a tool that can watch your back and prevent performance issues during development, long before they affect production systems, can save your company a lot of money and make you a hero!

Hypersistence Optimizer is that tool, and it works with Spring Boot, Spring Framework, Jakarta EE, Java EE, Quarkus, Micronaut, or Play Framework.

So, rather than allowing performance issues to annoy your customers, you are better off preventing those issues using Hypersistence Optimizer and enjoying spending your time on the things that you love!

Introduction

While answering this question on the Hibernate forum, I realized that it’s a good idea to write an article about getting the actual execution plan for an Oracle SQL query using Hibernate query hints feature.

How to map a JPA entity to a View or SQL query using Hibernate

Are you struggling with performance issues in your Spring, Jakarta EE, or Java EE application?

Imagine having a tool that could automatically detect performance issues in your JPA and Hibernate data access layer long before pushing a problematic change into production!

With the widespread adoption of AI agents generating code in a heartbeat, having such a tool that can watch your back and prevent performance issues during development, long before they affect production systems, can save your company a lot of money and make you a hero!

Hypersistence Optimizer is that tool, and it works with Spring Boot, Spring Framework, Jakarta EE, Java EE, Quarkus, Micronaut, or Play Framework.

So, rather than allowing performance issues to annoy your customers, you are better off preventing those issues using Hypersistence Optimizer and enjoying spending your time on the things that you love!

Introduction

In this article, you are going to learn how to map a JPA entity to the ResultSet of a SQL query using the @Subselect Hibernate-specific annotation.

How to cache non-existing entity fetch results with JPA and Hibernate

Are you struggling with performance issues in your Spring, Jakarta EE, or Java EE application?

Imagine having a tool that could automatically detect performance issues in your JPA and Hibernate data access layer long before pushing a problematic change into production!

With the widespread adoption of AI agents generating code in a heartbeat, having such a tool that can watch your back and prevent performance issues during development, long before they affect production systems, can save your company a lot of money and make you a hero!

Hypersistence Optimizer is that tool, and it works with Spring Boot, Spring Framework, Jakarta EE, Java EE, Quarkus, Micronaut, or Play Framework.

So, rather than allowing performance issues to annoy your customers, you are better off preventing those issues using Hypersistence Optimizer and enjoying spending your time on the things that you love!

Introduction

Sergey Chupov asked me a very good question on Twitter:

https://twitter.com/scadgek/status/946838214744203269

In this article, I’m going to show you how to cache null results when using JPA and Hibernate.

JDBC Driver Connection URL strings

Are you struggling with performance issues in your Spring, Jakarta EE, or Java EE application?

Imagine having a tool that could automatically detect performance issues in your JPA and Hibernate data access layer long before pushing a problematic change into production!

With the widespread adoption of AI agents generating code in a heartbeat, having such a tool that can watch your back and prevent performance issues during development, long before they affect production systems, can save your company a lot of money and make you a hero!

Hypersistence Optimizer is that tool, and it works with Spring Boot, Spring Framework, Jakarta EE, Java EE, Quarkus, Micronaut, or Play Framework.

So, rather than allowing performance issues to annoy your customers, you are better off preventing those issues using Hypersistence Optimizer and enjoying spending your time on the things that you love!

Introduction

Ever wanted to connect to a relational database using Java and didn’t know the URL connection string?

Then, this article is surely going to help you from now on.

Connect to a relational database using #Java with JDBC Driver Connection URL strings - @vlad_mihalcea https://t.co/vdCpoWvrIE pic.twitter.com/hy2OuQrYkC

— Java (@java) February 15, 2018

How to use the Hibernate Query Cache for DTO projections

Are you struggling with performance issues in your Spring, Jakarta EE, or Java EE application?

Imagine having a tool that could automatically detect performance issues in your JPA and Hibernate data access layer long before pushing a problematic change into production!

With the widespread adoption of AI agents generating code in a heartbeat, having such a tool that can watch your back and prevent performance issues during development, long before they affect production systems, can save your company a lot of money and make you a hero!

Hypersistence Optimizer is that tool, and it works with Spring Boot, Spring Framework, Jakarta EE, Java EE, Quarkus, Micronaut, or Play Framework.

So, rather than allowing performance issues to annoy your customers, you are better off preventing those issues using Hypersistence Optimizer and enjoying spending your time on the things that you love!

Introduction

On the Hibernate forum, I noticed the following question which is about using the Hibernate Query Cache for storing DTO projections, not entities.

While caching JPQL queries which select entities is rather typical, caching DTO projections is a lesser-known feature of the Hibernate second-level Query Cache.