The best way to lazy load entity attributes using JPA and Hibernate

Introduction

When fetching an entity, all attributes are going to be loaded as well. This is because every entity attribute is implicitly marked with the @Basic annotation whose default fetch policy is FetchType.EAGER.

However, the attribute fetch strategy can be set to FetchType.LAZY, in which case the entity attribute is loaded with a secondary select statement upon being accessed for the first time.

```java
@Basic(fetch = FetchType.LAZY)
```

This configuration alone is not sufficient because Hibernate requires bytecode instrumentation to intercept the attribute access request and issue the secondary select statement on demand.

Bytecode enhancement

When using the Maven bytecode enhancement plugin, the enableLazyInitialization configuration property must be set to true as illustrated in the following example:

```xml
<plugin>
    <groupId>org.hibernate.orm.tooling</groupId>
    <artifactId>hibernate-enhance-maven-plugin</artifactId>
    <version>${hibernate.version}</version>
    <executions>
        <execution>
            <configuration>
                <failOnError>true</failOnError>
                <enableLazyInitialization>true</enableLazyInitialization>
            </configuration>
            <goals>
                <goal>enhance</goal>
            </goals>
        </execution>
    </executions>
</plugin>
```

With this configuration in place, all JPA entity classes are going to be instrumented with lazy attribute fetching. This process takes place at build time, right after entity classes are compiled from their associated source files.
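A quick sanity check can confirm that the enhancement step actually ran. The sketch below assumes, to the best of my understanding of Hibernate's enhancer, that entities enhanced with lazy initialization implement the `org.hibernate.engine.spi.PersistentAttributeInterceptable` interface. The `EnhancementCheck` class and its method names are hypothetical helpers, not part of Hibernate, and the interface is matched by name so the snippet compiles without Hibernate on the classpath.

```java
import java.util.ArrayDeque;
import java.util.Deque;
import java.util.HashSet;
import java.util.Set;

/** Hypothetical helper: detects by name whether a class looks bytecode-enhanced. */
final class EnhancementCheck {

    // Assumption: enhanced entities implement this Hibernate interface.
    static final String INTERCEPTABLE =
        "org.hibernate.engine.spi.PersistentAttributeInterceptable";

    private EnhancementCheck() {}

    static boolean isEnhanced(Class<?> type) {
        return implementsByName(type, INTERCEPTABLE);
    }

    /** Walks superclasses and interfaces looking for an interface with the given name. */
    static boolean implementsByName(Class<?> type, String interfaceName) {
        Deque<Class<?>> pending = new ArrayDeque<>();
        Set<Class<?>> seen = new HashSet<>();
        pending.push(type);
        while (!pending.isEmpty()) {
            Class<?> current = pending.pop();
            if (!seen.add(current)) {
                continue; // already visited
            }
            if (current.isInterface() && current.getName().equals(interfaceName)) {
                return true;
            }
            if (current.getSuperclass() != null) {
                pending.push(current.getSuperclass());
            }
            for (Class<?> parent : current.getInterfaces()) {
                pending.push(parent);
            }
        }
        return false;
    }
}
```

Calling `EnhancementCheck.isEnhanced(Attachment.class)` from a test would then indicate whether the build produced instrumented classes. If it returns false, the plugin execution is probably not bound to the build.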

The attribute lazy fetching mechanism is very useful when dealing with column types that store large amounts of data (e.g. BLOB, CLOB, VARBINARY). This way, the entity can be fetched without automatically loading data from the underlying large column types, therefore improving performance.

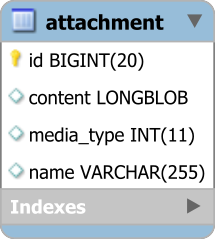

To demonstrate how attribute lazy fetching works, the following example is going to use an Attachment entity which can store any media type (e.g. PNG, PDF, MPEG).

```java
@Entity
@Table(name = "attachment")
public class Attachment {

    @Id
    @GeneratedValue
    private Long id;

    private String name;

    @Enumerated
    @Column(name = "media_type")
    private MediaType mediaType;

    @Lob
    @Basic(fetch = FetchType.LAZY)
    private byte[] content;

    //Getters and setters omitted for brevity
}
```

Properties such as the entity identifier, the name or the media type are to be fetched eagerly on every entity load. On the other hand, the media file content should be fetched lazily, only when being accessed by the application code.

After the Attachment entity is instrumented, the class bytecode is changed as follows:

```java
@Transient
private transient PersistentAttributeInterceptor
    $$_hibernate_attributeInterceptor;

public byte[] getContent() {
    return $$_hibernate_read_content();
}

public byte[] $$_hibernate_read_content() {
    if ($$_hibernate_attributeInterceptor != null) {
        this.content = ((byte[])
            $$_hibernate_attributeInterceptor.readObject(
                this, "content", this.content));
    }
    return this.content;
}
```

The content attribute fetching is done by the PersistentAttributeInterceptor object reference, therefore providing a way to load the underlying BLOB column only when the getter is called for the first time.
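To make the interception idea concrete without a database, here is a plain-Java sketch of the same pattern. It is only an illustration: `AttributeInterceptor`, `LazyContentInterceptor`, and `LazyAttachment` are hypothetical stand-ins, not Hibernate types, and the `Supplier` plays the role of the secondary SELECT.

```java
import java.util.function.Supplier;

/** Hypothetical, simplified stand-in for Hibernate's attribute interceptor. */
interface AttributeInterceptor {
    Object readObject(Object owner, String attributeName, Object currentValue);
}

/** Loads the "content" attribute on the first read and reuses it afterwards. */
final class LazyContentInterceptor implements AttributeInterceptor {

    private final Supplier<byte[]> loader; // stands in for the secondary SELECT
    private boolean initialized;
    int loadCount; // how many times the loader was invoked

    LazyContentInterceptor(Supplier<byte[]> loader) {
        this.loader = loader;
    }

    @Override
    public Object readObject(Object owner, String attributeName, Object currentValue) {
        if (!initialized && "content".equals(attributeName)) {
            initialized = true;
            loadCount++;
            return loader.get();
        }
        return currentValue; // already loaded, or not a lazy attribute
    }
}

/** Mirrors the shape of the instrumented getter shown above. */
final class LazyAttachment {

    private final String name;
    private final AttributeInterceptor interceptor;
    private byte[] content;

    LazyAttachment(String name, AttributeInterceptor interceptor) {
        this.name = name;
        this.interceptor = interceptor;
    }

    String getName() {
        return name; // eager attribute, no interception needed in this sketch
    }

    byte[] getContent() {
        if (interceptor != null) {
            content = (byte[]) interceptor.readObject(this, "content", content);
        }
        return content;
    }
}
```

Reading `getName()` never touches the loader, while the first `getContent()` call triggers it exactly once. This matches the behaviour the instrumented getter is meant to provide.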

When executing the following test case:

```java
Attachment book = entityManager.find(
    Attachment.class, bookId);

LOGGER.debug("Fetched book: {}", book.getName());

assertArrayEquals(
    Files.readAllBytes(bookFilePath),
    book.getContent()
);
```

Hibernate generates the following SQL queries:

```sql
SELECT a.id AS id1_0_0_,
       a.media_type AS media_ty3_0_0_,
       a.name AS name4_0_0_
FROM   attachment a
WHERE  a.id = 1

-- Fetched book: High-Performance Java Persistence

SELECT a.content AS content2_0_
FROM   attachment a
WHERE  a.id = 1
```

Because the content attribute is mapped with the FetchType.LAZY strategy and lazy fetching bytecode enhancement is enabled, the content column is not fetched along with the other columns that initialize the Attachment entity. Only when the data access layer accesses the content property does Hibernate issue a secondary select to load this attribute as well.

Just like FetchType.LAZY associations, this technique is prone to N+1 query problems, so caution is advised. One slight disadvantage of the bytecode enhancement mechanism is that all entity properties, not just the ones mapped with the FetchType.LAZY strategy, are going to be transformed, as previously illustrated.
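The N+1 risk is easy to see with a small simulation. The sketch below uses no JPA at all: `QueryCounter`, `LazyDocument`, and `NPlusOneDemo` are hypothetical names, and each call to `record()` stands for one SQL statement that Hibernate would issue.

```java
import java.util.ArrayList;
import java.util.List;

/** Hypothetical counter standing in for the number of SQL statements executed. */
final class QueryCounter {
    private int count;

    void record() {
        count++;
    }

    int count() {
        return count;
    }
}

/** Simulated entity whose content is loaded by its own "query" on first access. */
final class LazyDocument {

    private final long id;
    private final QueryCounter counter;
    private byte[] content; // null until first access

    LazyDocument(long id, QueryCounter counter) {
        this.id = id;
        this.counter = counter;
    }

    byte[] getContent() {
        if (content == null) {
            counter.record(); // one secondary SELECT for this row
            content = new byte[] {(byte) id};
        }
        return content;
    }
}

final class NPlusOneDemo {

    private NPlusOneDemo() {}

    /** One query loads all rows without their lazy column. */
    static List<LazyDocument> findAll(int rows, QueryCounter counter) {
        counter.record();
        List<LazyDocument> result = new ArrayList<>();
        for (long id = 1; id <= rows; id++) {
            result.add(new LazyDocument(id, counter));
        }
        return result;
    }

    /** Touching the lazy attribute of every row adds one query per row. */
    static int queriesWhenReadingAllContent(int rows) {
        QueryCounter counter = new QueryCounter();
        for (LazyDocument document : findAll(rows, counter)) {
            document.getContent();
        }
        return counter.count();
    }
}
```

With 10 rows, the simulation records 11 statements: one for the list and one per row for the lazy attribute. When every row's content is needed, fetching it in the original query is usually the better choice.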

Fetching subentities

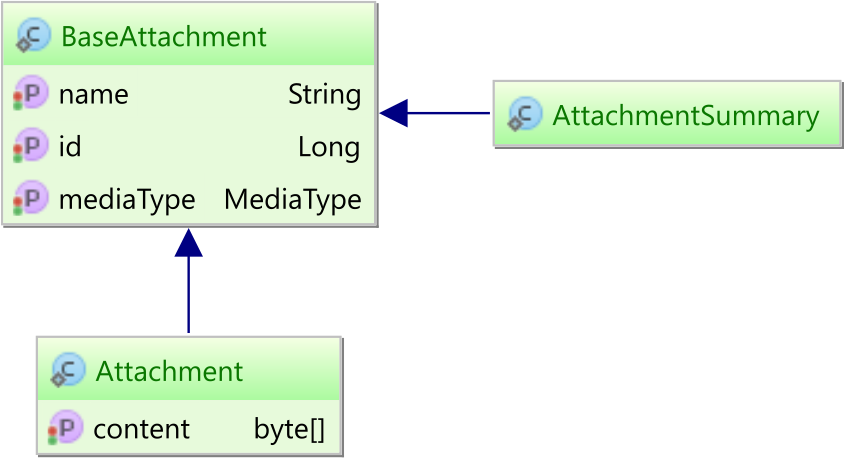

Another approach to avoid loading table columns that are rather large is to map multiple subentities to the same database table.

Both the Attachment entity and the AttachmentSummary subentity inherit all common attributes from a BaseAttachment superclass.

```java
@MappedSuperclass
public class BaseAttachment {

    @Id
    @GeneratedValue
    private Long id;

    private String name;

    @Enumerated
    @Column(name = "media_type")
    private MediaType mediaType;

    //Getters and setters omitted for brevity
}
```

The AttachmentSummary subentity extends BaseAttachment without declaring any new attribute:

```java
@Entity
@Table(name = "attachment")
public class AttachmentSummary
    extends BaseAttachment {}
```

The Attachment entity inherits all the base attributes from the BaseAttachment superclass and maps the content column as well.

```java
@Entity
@Table(name = "attachment")
public class Attachment
    extends BaseAttachment {

    @Lob
    private byte[] content;

    //Getters and setters omitted for brevity
}
```

When fetching the AttachmentSummary subentity:

```java
AttachmentSummary bookSummary = entityManager.find(
    AttachmentSummary.class, bookId);
```

The generated SQL statement is not going to fetch the content column:

```sql
SELECT a.id as id1_0_0_,
       a.media_type as media_ty2_0_0_,
       a.name as name3_0_0_
FROM   attachment a
WHERE  a.id = 1
```

However, when fetching the Attachment entity:

```java
Attachment book = entityManager.find(
    Attachment.class, bookId);
```

Hibernate is going to fetch all columns from the underlying database table:

```sql
SELECT a.id as id1_0_0_,
       a.media_type as media_ty2_0_0_,
       a.name as name3_0_0_,
       a.content as content4_0_0_
FROM   attachment a
WHERE  a.id = 1
```

Conclusion

To lazy fetch entity attributes, you can either use bytecode enhancement or subentities. Although bytecode instrumentation allows you to use only one entity per table, subentities are more flexible and can even deliver better performance since they don’t involve an interceptor call whenever reading an entity attribute.

When it comes to reading data, subentities are very similar to DTO projections. However, unlike DTO projections, subentities can track state changes and propagate them to the database.

I have an entity with 3 CLOBs and I wanted to make them load lazily. I used this technique, except that loading any of the 3 causes all of them to load. How can I prevent that? This has a huge performance impact because the one I’m reading is usually null but the others are large.

I could investigate a solution via consulting if you’re interested.